AI claims are everywhere in enterprise software. Incentive compensation management (ICM), and the broader sales performance management landscape, are no exception. Nearly every platform now says it has AI, but that label tells buyers almost nothing. The real question is not whether AI was added. It is whether the system was built in a way that AI can actually interpret, operate on, and extend safely.

That distinction matters because AI adoption and AI value are not the same thing. AI usage is rising fast across the enterprise, but measurable business impact still lags. In fact:

- 78% of organizations reported using AI in 2024, up from 55% the year before (source).

- More than 80% of respondents said their organizations were not seeing tangible enterprise-level EBIT impact from generative AI (source).

That gap explains why so many products look strong in a demo and fall apart in production. In most cases, the issue is not the model. It is the architecture underneath it.

For ICM buyers, this is the line between AI-integrated and AI-native. AI-integrated software adds a layer on top of a system that still depends on manual configuration, buried logic, and human translation. AI-native software is different. It gives AI structured context, governed logic, and a system it can work within reliably in real time.

In a category where accuracy, auditability, and control matter, wrappers are simply not enough.

Why AI-Integrated ICM Falls Short

The problem with most AI-integrated ICM platforms is not the interface. It is the architecture underneath it. When the system still depends on manual configuration, buried logic, and human translation, AI can only sit on top of the problem, not solve it.

Legacy ICM was built for manual configuration, not machine interpretation

Legacy ICM systems were built for administrators, consultants, and analysts to configure manually over time. In practice, that means logic often ends up buried across custom rules, SQL, spreadsheets, implementation notes, and specialist knowledge instead of living in one governed system of record.

That makes even routine changes slower and riskier than they should be. Humans have to trace dependencies by hand, and machines have even less to work with. When the underlying logic is fragmented and poorly exposed, AI cannot reason over it safely. It can only sit on top of it.

Adding a chatbot does not change the underlying system

A chatbot is an interface, not an architecture. Adding a prompt layer on top of a legacy ICM platform may make the product look more modern, but it doesn’t change how the system stores logic, handles dependencies, or supports change.

This distinction matters. If the underlying system is still brittle, services-heavy, and difficult to interpret, AI does not make it more governable, adaptable, or machine-readable. It just gives users a new way to interact with the same structural limitations. That’s why “AI-integrated” is often a surface claim. Unless the logic model underneath the product changed, the architecture did not.

High-stakes financial workflows cannot run on black-box reasoning

In sales compensation, plausible is not good enough. If an AI system can’t show how it reached an output, what data it relied on, or where uncertainty exists, it doesn’t belong anywhere near payout logic.

And that standard isn’t just theoretical. CFOs, RevOps leaders, and audit stakeholders need systems they can verify, explain, and defend. When outputs affect earnings, trust, and compliance, weak grounding and low explainability are not minor issues. They are disqualifiers.

The Shift: From Manual Configuration to Machine-Readable Logic

This next phase of ICM is not about layering AI onto the same legacy foundation. It is about changing how compensation logic is represented inside the system. Machine-readable compensation logic means compensation plans, rules, entities, dependencies, and exceptions are structured in a way software can interpret reliably, instead of leaving critical meaning buried under manual setup, custom workarounds, and specialist knowledge.

This shift in representation is what makes useful AI possible. When the logic is explicit and governed, AI can work within the system with more accuracy, control, and consistency, and with fewer errors. That is the real change in the market: moving from software that merely includes AI features to systems built for machine interpretation from the very beginning.
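To make "machine-readable" concrete, here is a minimal sketch of a tiered commission plan expressed as structured data rather than buried in custom rules. It is an illustration only: the names `Tier`, `CommissionPlan`, and `payout`, and the marginal-tier payout rule, are assumptions made for this example, not a description of any vendor's data model.

```python
from __future__ import annotations

from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class Tier:
    """One band of quota attainment and the rate paid on revenue inside it."""
    lower: Decimal            # inclusive bound as a fraction of quota (1 = 100%)
    upper: Optional[Decimal]  # exclusive bound; None means the band is uncapped
    rate: Decimal


@dataclass(frozen=True)
class CommissionPlan:
    """Hypothetical plan object: everything needed to compute a payout is explicit."""
    name: str
    version: int
    quota: Decimal
    tiers: tuple[Tier, ...]


def payout(plan: CommissionPlan, revenue: Decimal) -> Decimal:
    """Pay each tier's rate only on the revenue that falls inside that tier."""
    total = Decimal("0")
    for tier in plan.tiers:
        band_start = tier.lower * plan.quota
        band_end = revenue if tier.upper is None else tier.upper * plan.quota
        in_band = min(revenue, band_end) - band_start
        if in_band > 0:
            total += in_band * tier.rate
    return total


plan = CommissionPlan(
    name="AE standard",
    version=1,
    quota=Decimal("100000"),
    tiers=(
        Tier(Decimal("0"), Decimal("1"), Decimal("0.05")),  # up to 100% of quota
        Tier(Decimal("1"), None, Decimal("0.08")),          # above quota
    ),
)
print(payout(plan, Decimal("120000")))
```

Because the tiers, quota, and version are plain fields, both a person and a program can answer "what rate applies above quota?" by reading the plan, with no need to trace custom code.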

Introducing AI-Native ICM

AI-native ICM is not defined by chatbots, assistants, embedded models, or other AI tools. As we’ve discussed, those are merely interfaces. They may improve access or usability, but they don’t determine whether a system is architecturally prepared for AI.

What defines AI-native ICM is the structure underneath the interface. The system must be built around machine-interpretable architecture, governed context, and structured logic that software can read, reason over, and extend reliably at enterprise scale. In practice, that means compensation logic is not hidden across custom rules, disconnected artifacts, and specialist knowledge. It’s represented in a way the system can interpret with control, traceability, and consistency.

This is the clear line separating AI-integrated and AI-native. AI-integrated platforms add AI to systems that still rely on legacy operating assumptions. AI-native platforms are built so AI can function inside the architecture itself. That distinction matters because it turns "AI-native" from a marketing claim into a durable technical and operational standard.

The Architectural Requirements of AI-Native ICM

Defining the category is only the first step. To make AI-native ICM credible, we need to be specific about the structural requirements that separate a durable architecture from a surface-level claim.

A contextual layer that brings awareness to AI-governed systems

AI cannot work reliably from field names, labels, and loose configuration data alone. It needs structured context that tells the system what each object represents, how it connects to other components, what logic governs it, and where the boundaries are. Without that layer, AI can describe what it sees, but it can’t support AI agents or other system-level reasoning with enough precision for enterprise compensation.

This is where the contextual layer comes into play. The contextual layer provides the semantic foundation that defines what a rule is, how a plan component relates to another component, what changes occurred between versions, which dependencies are upstream or downstream, and what constraints apply before anything can be modified or executed. Instead of forcing AI to infer meaning from fragmented inputs, the system gives it governed context up front.

This changes the role AI can play. It moves from narration to reasoning. The model is no longer summarizing configuration screens or guessing at intent from partial information. It can evaluate relationships, interpret changes in context, and operate within clear structural boundaries. In ICM, the contextual layer is the difference between an assistant that sounds helpful and a system that can support real plan building, change analysis, and controlled execution.
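One piece of that context, knowing which objects sit downstream of a change, can be sketched as a small dependency graph. The object names (`quota`, `accelerator_rate`, and so on) and the `downstream` helper are hypothetical, chosen only to illustrate the idea.

```python
from collections import deque

# Hypothetical plan objects: each key depends on the objects in its list.
DEPENDS_ON = {
    "quota_attainment": ["closed_revenue", "quota"],
    "accelerator_rate": ["quota_attainment"],
    "commission": ["closed_revenue", "accelerator_rate"],
    "payout": ["commission", "clawback"],
}


def downstream(target, depends_on):
    """Return every object that directly or indirectly depends on `target`."""
    # Invert the graph so we can walk from an object to its dependents.
    dependents = {}
    for node, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(node)

    # Breadth-first walk over the inverted graph.
    seen = set()
    queue = deque([target])
    while queue:
        current = queue.popleft()
        for child in dependents.get(current, ()):
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


# Which objects are affected if the quota definition changes?
print(sorted(downstream("quota", DEPENDS_ON)))
```

With relationships declared this way, "what breaks if quota changes?" becomes a graph query rather than a manual trace through rules and spreadsheets.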

A component library that reduces ambiguity and increases reusability

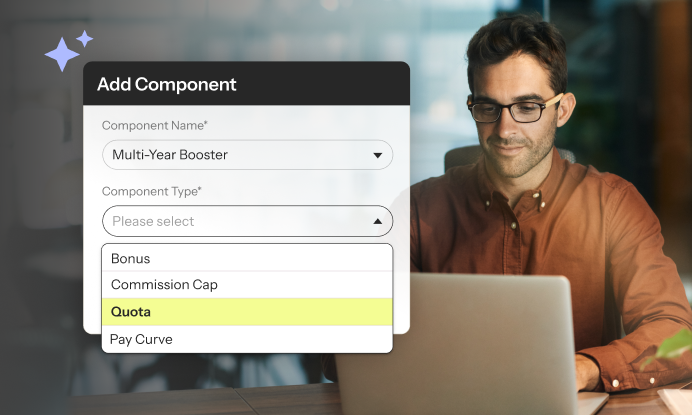

A machine-readable system still needs consistent building blocks. This is where a component library matters. When common compensation logic is captured as reusable, structured components, teams no longer have to rebuild the same mechanics from scratch every time a plan changes.

That has practical benefits for both people and systems. Operators can assemble and adjust plans faster, with more consistency and less dependence on one-off logic. AI gets a clearer set of objects to work with instead of trying to interpret bespoke builds, inconsistent naming, and repeated variations of the same rule. The result is less ambiguity, stronger governance, and a much shorter path from plan change to plan execution.

Over time, that model scales better than custom configuration. It creates consistency across plans, makes iteration faster, and reduces the operational drag that usually comes with growth and complexity.
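As a rough illustration of the idea, the sketch below registers a few reusable components and assembles a plan from a declarative spec that refers to them by name. The component names (`flat_rate`, `accelerator`, `cap`), the spec format, and `build_plan` are all assumptions invented for this example.

```python
from decimal import Decimal

# Hypothetical library: component name -> factory returning a step function.
# Each step takes the running payout amount and a context dict.
LIBRARY = {}


def register(name):
    """Decorator that adds a component factory to the library under `name`."""
    def wrap(factory):
        LIBRARY[name] = factory
        return factory
    return wrap


@register("flat_rate")
def flat_rate(rate):
    return lambda amount, ctx: amount + ctx["revenue"] * rate


@register("accelerator")
def accelerator(threshold, multiplier):
    def apply(amount, ctx):
        attainment = ctx["revenue"] / ctx["quota"]
        return amount * multiplier if attainment >= threshold else amount
    return apply


@register("cap")
def cap(maximum):
    return lambda amount, ctx: min(amount, maximum)


def build_plan(spec):
    """Assemble a plan from a list of {'use': name, 'params': {...}} entries."""
    steps = [LIBRARY[item["use"]](**item["params"]) for item in spec]

    def run(ctx):
        amount = Decimal("0")
        for step in steps:
            amount = step(amount, ctx)
        return amount
    return run


spec = [
    {"use": "flat_rate", "params": {"rate": Decimal("0.10")}},
    {"use": "accelerator", "params": {"threshold": Decimal("1"), "multiplier": Decimal("1.5")}},
    {"use": "cap", "params": {"maximum": Decimal("20000")}},
]
plan = build_plan(spec)
print(plan({"revenue": Decimal("120000"), "quota": Decimal("100000")}))
```

Changing the plan means editing the spec, not rewriting logic, and every plan that uses `accelerator` shares one definition of what an accelerator is.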

A governed execution model that preserves trust

Even well-grounded AI needs control points. In enterprise ICM, outputs must be reviewable, changes must be versioned, and decisions must be traceable back to the logic and data behind them. Without those controls, speed comes at the expense of trust.

AI-native doesn’t mean uncontrolled automation. It means intelligent execution inside a governed system. Constraints, approvals, audit trails, and version history are not secondary features. They are what make AI usable in a category where accuracy, accountability, and enterprise credibility matter.
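A minimal sketch of those control points, assuming a simple propose, approve, apply flow: a change cannot be applied until someone other than its author approves it, every applied change creates a new version, and each step is written to an audit log. The classes `Proposal` and `GovernedPlan` and the separation-of-duties rule are illustrative assumptions, not a reference implementation.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Proposal:
    """A requested change to plan rules, whether drafted by a person or by AI."""
    author: str
    changes: dict
    approved_by: Optional[str] = None


@dataclass
class GovernedPlan:
    rules: dict
    version: int = 1
    history: list = field(default_factory=list)    # (version, rules) snapshots
    audit_log: list = field(default_factory=list)  # who did what, and when

    def _log(self, actor, action, detail):
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "detail": detail,
        })

    def approve(self, proposal, approver):
        # Assumed control: authors may not approve their own changes.
        if approver == proposal.author:
            raise PermissionError("author cannot approve their own change")
        proposal.approved_by = approver
        self._log(approver, "approved", proposal.changes)

    def apply(self, proposal):
        if proposal.approved_by is None:
            raise PermissionError("proposal has not been approved")
        # Snapshot the current version before changing anything.
        self.history.append((self.version, dict(self.rules)))
        self.rules = {**self.rules, **proposal.changes}
        self.version += 1
        self._log(proposal.author, "applied", proposal.changes)


plan = GovernedPlan(rules={"base_rate": "0.05"})
change = Proposal(author="ai-assistant", changes={"base_rate": "0.06"})
plan.approve(change, approver="comp-admin")
plan.apply(change)
print(plan.version, plan.rules, len(plan.audit_log))
```

The point of the sketch is the ordering: automation can draft the proposal, but the system itself enforces review, versioning, and traceability before anything reaches payout logic.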

From Prompts to Plans

Most of the market still talks about AI through the lens of prompts: ask a question, get an answer, move on. That framing makes sense for general-purpose assistants, but it is too limited for enterprise ICM. Compensation teams don’t just need better answers. They need a faster, more reliable way to turn business changes, including shifts in sales strategies, into governed system changes.

This is where Intent-to-Plan comes into play. Today, someone has to translate each compensation request into system logic by hand. With Intent-to-Plan, the team expresses the business intent and the platform generates an AI-assisted plan proposal that is structured and reviewable. Instead of starting with tickets, custom configurations, and specialist interpretations, the process starts with the desired outcome and moves directly toward scenario modeling and a versioned plan design.

This matters because the real bottleneck in ICM is not asking questions. It’s operational translation. Every plan change has to move from business language and related inputs like forecasting or capacity planning into rules, dependencies, constraints, and execution logic. In legacy systems, that work is slow, manual, and dependent on a small group of experts. In an AI-native system, that path gets much shorter. Teams can iterate faster, rely less on specialists, and move from business change to system change with more speed and control.

Why This Matters to Enterprise Teams

The architectural differences between AI-integrated and AI-native ICM are not abstract. They shape how enterprise teams manage risk, absorb change, and maintain control as compensation complexity grows.

For finance leaders, this is about control and defensibility

For finance leaders, the value of AI-native ICM is not novelty. It is the ability to move faster without weakening control. When compensation logic is structured, governed, and traceable, teams can adapt plans, model changes, and execute updates with a clearer line back to the rules, data, and approvals behind them.

That matters because system design is a risk decision. If logic is hard to interpret, difficult to audit, or dependent on specialist intervention, every change introduces more exposure. AI-native architecture reduces that risk by making accuracy, governance, and financial integrity part of how the system operates, not something teams have to bolt on after the fact.

For RevOps teams, this is about ownership and adaptability

For RevOps and sales compensation teams, the issue with AI-integrated systems is a lack of operational leverage. Legacy systems create too much dependence on manual work, specialist knowledge, and external services just to keep plans current. As complexity grows, internal teams spend more time translating, troubleshooting, and coordinating changes than actually managing the business.

AI-native architecture changes that model. When logic is structured and machine-interpretable, teams can adapt plans, make updates, and respond quickly without triggering a rebuild. That gives operators more direct control over the system, reduces hidden complexity, and keeps ownership closer to the people responsible for outcomes.

For analysts, this is a category shift, not a trendy feature

For analysts, AI-native ICM should be evaluated as an architectural distinction, not a new layer of product packaging. The meaningful question is not, “Who added an assistant first?” It’s, “Which platforms were built with the structured logic, governed context, and system design needed to support intelligent execution in enterprise compensation?”

That creates a cleaner way to assess vendor claims. Instead of comparing AI features in isolation, analysts can evaluate whether the underlying architecture actually changed. That makes AI-native ICM a more durable market shift than a temporary feature trend because it reflects a deeper evolution in how enterprise systems are designed.

Variabl is the only AI-Native ICM in the Market

Variabl was built intentionally to meet all of the requirements of AI-Native ICM architecture. Structured components, governed context, versioned logic, and enterprise control are not supporting details. They are part of our foundation.

Variabl was built for continuous change and machine interpretation, not retrofitted onto assumptions from an earlier generation of ICM. This is not a story about layering AI onto brittle infrastructure. It’s a story about building a system that intelligence can operate inside, with the structure, traceability, and control enterprise teams require.

The Future of ICM is Already Here

The market isn’t short on AI claims. It’s short on architectural clarity. Too many vendors are treating AI as a presentation layer problem, which is why buyers keep getting polished demos without a meaningful change in how the system actually works.

At Variabl, we believe that’s the wrong standard. In enterprise ICM, the question isn’t whether a platform can generate a fluent response. It is whether the system can interpret compensation logic safely, operate within clear constraints, and support change without creating more risk. That’s the line that matters.

The Variabl point of view is this: Integrated isn’t enough. A wrapper may change the interface, but it doesn’t change the architecture underneath it. And in a category where accuracy, control, and trust matter, that difference is everything.