Enterprise fintech has an AI problem, and it’s probably not what you’d expect. Here it is: AI enthusiasm dramatically outpaces real deployment.

Across industries, and especially in financial services, executives are clear about the strategic importance of AI. Boardrooms talk about it constantly. Product roadmaps prioritize it. Investor decks highlight it as a central pillar of future growth.

Yet when you look past the surface and into actual day-to-day operations, the picture looks very different. Executives want AI, but they're not actually using it as much as you'd think. Consider these statistics:

- 79% of global executives report having some familiarity with generative AI, but only 22% report using it regularly in their work, and even fewer (7%) report fully scaling AI across workflows (source).

- 79% of organizations report running AI pilots or limited deployments. Only about 25% to 30% report scaling AI across multiple business units or enterprise workflows (source).

And this gap is not driven by a lack of investment. Organizations are pouring resources into AI initiatives at an unprecedented rate and building them into long-term investment strategies. Industry research projects global AI spending to reach hundreds of billions of dollars annually over the next several years (source).

Capital, talent, and infrastructure are all flowing toward the technology. And yet, despite that momentum, many AI initiatives never make it past the experimentation stage.

A Recognizable Adoption Pattern in Enterprise

For people building products in the enterprise financial technology space, this adoption gap is not surprising. The same pattern appears with almost every major technology shift.

Innovation enthusiasm tends to peak during demos, not deployment. In controlled environments (clean datasets, ideal configurations, clear use cases), new capabilities look compelling. Stakeholders see faster workflows, cleaner outputs, fewer manual steps, and improved operational efficiency.

But once the system is connected to real infrastructure and real financial processes, the environment changes. Data is inconsistent. Integrations behave unpredictably. Edge cases appear quickly. What looked seamless in a demo suddenly becomes much harder to operationalize.

Operational friction kills adoption faster than bad technology. In fact, as many as 85% of AI projects fail to deliver their expected outcomes, often because organizations struggle with data readiness, system integration, and operational workflows rather than limitations in the underlying AI models (source).

Financial systems also invite an extra layer of skepticism. Unlike consumer AI tools, fintech platforms operate directly inside high-stakes workflows: financial reporting, incentive compensation payouts, regulatory compliance, risk management, and systems that ultimately feed into broader financial markets. Even small errors can have material consequences.

As a result, finance leaders evaluate automation differently than most software users. Before a system can be trusted, it must prove not only that it works, but that its outputs can be understood, verified, and explained in the context of real financial decisions and risk assessment.

To put it simply, AI adoption in fintech isn't a technology problem; it's a trust problem.

The Trust Barrier: Why AI Fails in High-Stakes Workflows

In fintech, adoption isn't about whether something works; it's about whether it holds up under scrutiny.

Finance leaders are trained to minimize risk, not experiment with it.

In fact, only 14% of CFOs completely trust AI to deliver accurate accounting data on its own (source). The hesitation isn't cultural; it's structural. Every output that touches financial reporting, compensation, or compliance needs to be defensible.

That's where many AI systems break down. It's not that they produce the wrong answer; it's that they can't clearly show how they arrived at it. In an environment where auditability matters, black-box outputs create immediate resistance.

And the stakes change everything. The tolerance for error in financial workflows is effectively zero. A model that gets something mostly right isn’t useful if it impacts payroll, commission pay, or reporting.

Until AI systems can meet that standard (clear, explainable, and verifiable), they won't be adopted in the workflows that matter most.

AI Fails in Fintech Because It Starts at the End

The issue isn’t just that financial workflows require trust. It’s that we introduce AI at the exact point where trust is hardest to earn.

Most AI efforts in fintech focus on final outputs such as commission calculations, forecasts, payouts, and reporting. The assumption is straightforward. These are complex, structured problems, so they should benefit most from automation and AI.

But these outputs sit at the very end of major workflows. This is where everything becomes real. Numbers turn into payments, reports, and decisions that carry financial and regulatory consequences.

That placement changes the adoption dynamic entirely.

Instead of easing AI into the workflow, we ask it to operate at the point of maximum scrutiny on day one. Before it has built trust. Before users have context. Before there is any tolerance for ambiguity. So even if the system works, it faces the highest possible bar immediately.

That is the real disconnect. AI is not failing in these environments because the problems are too complex. It is failing because we are starting at the one place where organizations are unwilling to compromise or take risks.

AI Gets Adopted When It Preserves Human Control

There is a simpler explanation for why some systems get adopted and others do not. People trust systems when they can see the decision-making process and intervene if necessary.

In enterprise environments, especially in finance, users are not looking to hand over control. They are responsible for the outcome. If something goes wrong, they’re the ones held accountable. So any system that removes the ability to validate, adjust, or override decisions immediately creates resistance.

This is where many AI implementations miss the mark. They aim to automate decisions end to end, when users actually want acceleration and efficiency without losing control.

And it's not just that users want to retain control. Research also shows that AI is more effective when it complements human capabilities rather than replacing them, emphasizing augmentation over substitution (source).

The systems that succeed are not the ones that eliminate human involvement. They are the ones that make humans faster, more accurate, and more confident in the decisions they are already responsible for.

The Shift: Where AI Actually Works

If adoption depends on trust, control, and placement, then the implication is straightforward.

AI shouldn’t start at the point of decision. It should start at the point of friction.

Instead of trying to automate final outcomes, the focus must shift to the work that leads up to them: the setup, configuration, data structuring, and integration work that slows teams down but does not carry the same level of risk.

This is where AI has a different adoption profile. The outputs are still important, but they are not final. They can be reviewed, adjusted, and validated before anything moves downstream. That changes the expectation from perfection to usefulness.

Under this model, AI is not responsible for the answer. It is responsible for getting you to a usable starting point much faster. That distinction matters.

Because once AI is positioned as a way to accelerate progress instead of replacing judgment, the resistance starts to fade. The system is no longer asking for trust upfront. It earns that trust by making the work easier.

What "Good" AI Looks Like in Fintech

If this shift is real, it should show up in how the system behaves on day one. In practice, good looks like:

- Starting with a structured commission model or plan, not a blank slate.

- Auto-generating plan components and AI-driven logic from existing data.

- Identifying data relationships across CRM, ERP, and payroll without manual mapping.

- Flagging errors with a clear root cause and suggested fixes.

- Producing outputs that can be reviewed and adjusted before anything is finalized.
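The pattern behind the list above (propose a starting point, flag problems with a root cause, then finalize only after human review) can be sketched in a few lines. This is a simplified, hypothetical Python illustration, not a description of any production system: the names `Issue`, `DraftPayout`, `build_drafts`, and `finalize`, the flat per-rep commission rate, and the two validation checks are all assumptions made for the example.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass
class Issue:
    """A flagged problem with a root cause and a suggested fix (hypothetical shape)."""
    rep_id: str
    root_cause: str
    suggested_fix: str


@dataclass
class DraftPayout:
    """A proposed payout: a starting point for review, not a final number."""
    rep_id: str
    amount: Decimal
    approved: bool = False  # flipped only by a human reviewer


def build_drafts(deals, rates):
    """Propose one payout per deal; flag problems instead of guessing around them.

    deals: list of (rep_id, deal_amount) pairs; rates: {rep_id: commission_rate}.
    A single flat rate per rep is a simplifying assumption.
    """
    drafts, issues = [], []
    for rep_id, deal_amount in deals:
        if rep_id not in rates:
            issues.append(Issue(rep_id, "no commission rate mapped for this rep",
                                "assign the rep to a plan, then recalculate"))
        elif deal_amount < 0:
            issues.append(Issue(rep_id, f"negative deal amount {deal_amount}",
                                "check the CRM record for a reversal or entry error"))
        else:
            drafts.append(DraftPayout(rep_id, deal_amount * rates[rep_id]))
    return drafts, issues


def finalize(drafts, issues):
    """Lock payouts only when every issue is resolved and every draft is approved."""
    if issues:
        raise ValueError(f"{len(issues)} unresolved issue(s); resolve before finalizing")
    pending = [d.rep_id for d in drafts if not d.approved]
    if pending:
        raise PermissionError(f"awaiting human approval for: {pending}")
    totals = {}
    for d in drafts:
        totals[d.rep_id] = totals.get(d.rep_id, Decimal("0")) + d.amount
    return totals


if __name__ == "__main__":
    rates = {"r1": Decimal("0.10"), "r2": Decimal("0.08")}
    deals = [("r1", Decimal("50000")), ("r2", Decimal("-1200")), ("r3", Decimal("9000"))]
    drafts, issues = build_drafts(deals, rates)
    for issue in issues:
        print(f"{issue.rep_id}: {issue.root_cause} -> {issue.suggested_fix}")
```

The key design choice is that `finalize` refuses to run while any issue is open or any draft is unapproved, so the automated step can only ever produce a reviewable draft, never a locked outcome.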

How AI shows up in Variabl specifically:

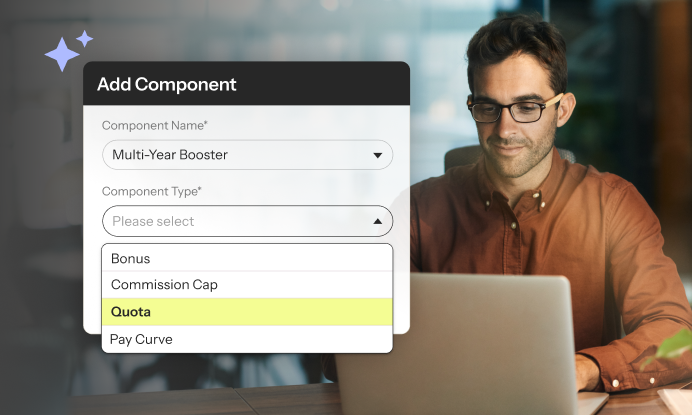

- AI-assisted plan configuration using effective-dated rules and reusable components.

- Automated data structuring across integrated systems with a no-code data manager (and SQL when preferred or needed).

- Guided query generation and transformation for complex compensation logic.

- Built-in validation, audit trails, and versioning to support reviews and approvals.

- Workflow support for approvals, disputes, and statement distribution.
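Effective-dated rules are a common way to keep compensation calculations reproducible: each rule version records the date it takes effect, and a calculation uses whichever version was in force on the transaction date. The sketch below is a generic Python illustration of that idea, assuming a single commission rate per plan component; `RATE_HISTORY`, `rate_on`, and `commission` are hypothetical names, not part of Variabl's product.

```python
from bisect import bisect_right
from datetime import date
from decimal import Decimal

# Hypothetical rule history for one plan component: (effective_from, rate),
# sorted by effective_from. Changing the rate appends a row; it never edits old rows.
RATE_HISTORY = [
    (date(2024, 1, 1), Decimal("0.08")),
    (date(2024, 7, 1), Decimal("0.10")),
    (date(2025, 1, 1), Decimal("0.09")),
]


def rate_on(history, as_of):
    """Return the rate from the latest row whose effective_from is on or before as_of."""
    starts = [effective_from for effective_from, _ in history]
    idx = bisect_right(starts, as_of)  # number of rows starting on or before as_of
    if idx == 0:
        raise LookupError(f"no rule in effect on {as_of}")
    return history[idx - 1][1]


def commission(history, deal_amount, close_date):
    """Apply the rule that was in effect on the deal's close date."""
    return deal_amount * rate_on(history, close_date)


if __name__ == "__main__":
    print(commission(RATE_HISTORY, Decimal("20000"), date(2024, 6, 30)))
    print(commission(RATE_HISTORY, Decimal("20000"), date(2024, 7, 1)))
```

Because a rate change appends a new row instead of overwriting the old one, re-running a June 2024 calculation still uses the 0.08 rate. That is the property that lets reviewers and auditors reproduce any past payout exactly.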

Critically, none of these applications replace ownership. Finance and RevOps teams still review, adjust, and approve everything before it’s finalized. The system produces a usable starting point, not a locked outcome. That preserves auditability and control while removing the slowest parts of the process.

The impact is immediate. Instead of spending weeks getting a system into a usable state, teams can move from raw data to a configured plan in a fraction of the time. Not because decisions are automated, but because the path to getting there is no longer manual.

The Lesson for Enterprise Builders and AI Adopters in Finance

Enterprise teams do not adopt AI all at once. They adopt it one trusted use case at a time.

The turning point is rarely a big strategic decision. It is a small operational moment where the system saves meaningful resources, produces a usable result in real time, and makes it easy to verify what happened. That is what changes behavior.

In fintech, that matters more than ambition. Teams do not need AI to do everything. They need it to do something useful, clearly, and under the right level of oversight. Once that happens consistently, usage expands. The system moves from interesting, to helpful, to part of the workflow.

That is the constraint most teams miss. Adoption is not driven by how advanced the system is. It is driven by how quickly it can prove value in a way users can understand, validate, and repeat.

The Difference Between AI That Gets Demoed and AI That Gets Used

Everyone wants AI in fintech. Very few teams actually use it in the workflows that matter.

After years of building in this space, we don't find that gap surprising. The technology isn't the constraint. The way it's applied is.

The systems that get demoed are optimized for capability. They try to automate the most complex, highest-stakes decisions from day one. They look impressive. They check the box. And then they get turned off.

The systems that get used look different. They show up earlier. They reduce friction. They make the work easier without taking control away from the people responsible for the outcome. They earn their place in the workflow instead of demanding it.

That is the lens we are using to build sales compensation software at Variabl.

- We are not trying to replace judgment. We are trying to accelerate it.

- We are not starting at the final answer. We are starting at the work that leads up to it.

- We are not asking for trust upfront. We are designing a platform that earns trust, every step of the way.

Because in enterprise fintech, adoption is the only thing that matters. And the difference between AI that gets adopted and AI that does not is simple: one tries to take over the work. The other makes the work better. Only one of those survives.